Web Services: Accessing Daymet Data

Web services allow direct browser viewing and/or file download from a browser URL using defined parameters. Understanding these services allows a user to query, subset, and automate machine-to-machine downloads of data. Two Web Services are described that demonstrate:

- Automating downloads of gridded subsets

- Automating file downloads from the Single Pixel Extraction Tool

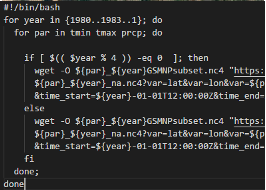

Scripts to Automate Gridded Subsets of Daymet Data

The NetCDF Subset Service (NCSS) uses a RESTful API that allows subsetting of netCDF datasets in coordinate space. These interactive requests can be automated through programmatic, machine-to-machine requests which involve the construction and submission of an HTTP request through an extended URL with defined parameters.

Batch Downloads - Example scripts to automate subset downloads

Understanding the NetCDF Subset Service (NCSS)

THREDDS instances are available that point to the Daymet North American dataset, 2-degree x 2-degree tile data, or pre-derived annual and monthly climatologies. Through the THREDDS data server, there is an integrated netCDF Subset Service (NCSS) using a REST API that allows for subsetting of netCDF datasets in coordinate space. Gridded data subsets are returned in CF-compliant netCDF formats.

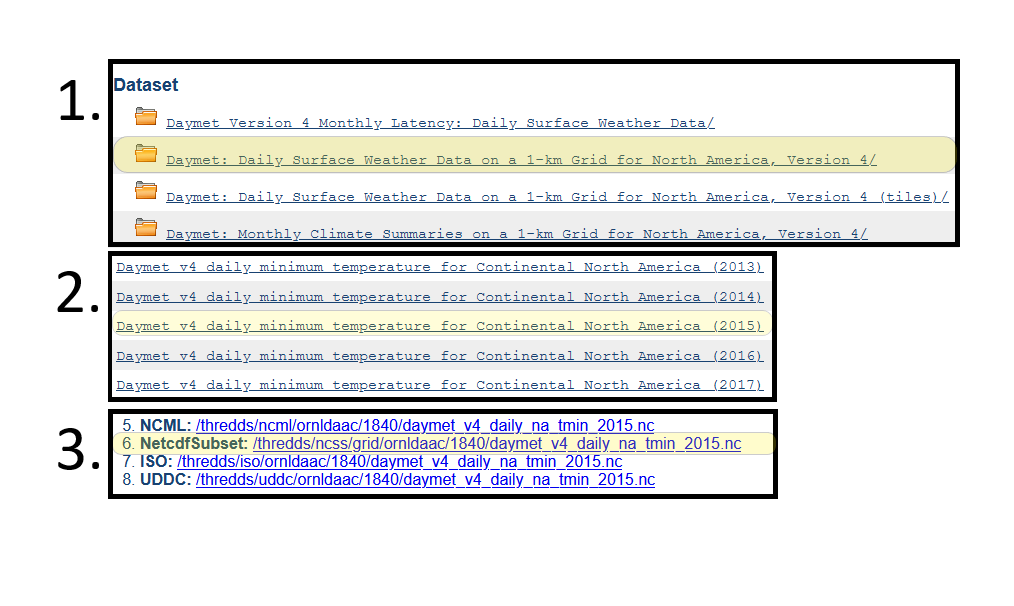

Explore the netCDF Subset Service with an example file through this series of links:

- Open THREDDS for Daily Weather Data

- Click the file Daymet daily minimum temperature for na (2015)

- Click the Netcdf Subset

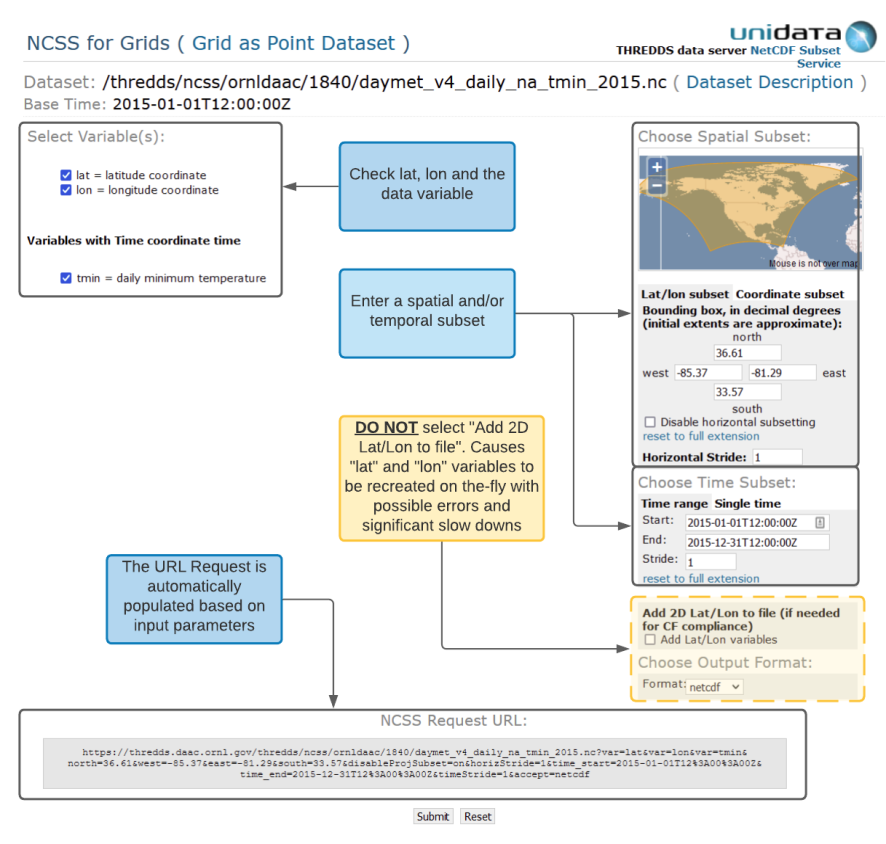

A graphical interface is shown in which you can enter spatial and temporal subsets. As the subset parameters are entered, an NCSS Request URL is updated.

Evaluating the URL request

https://thredds.daac.ornl.gov/thredds/ncss/ornldaac/1840/daymet_v4_daily_na_tmin_2015.nc?var=lat&var=lon&var=tmin&north=36.61&west=-85.37&east=-81.29&south=33.57&disableProjSubset=on&horizStride=1&time_start=2015-01-01T12%3A00%3A00Z&time_end=2015-12-31T12%3A00%3A00Z&timeStride=1&accept=netcdf

The query entered in the GUI above generates this NCSS Request URL.

You can copy and paste this URL into a web browser to issue the NCSS subset request, changing the spatial and/or temporal ranges or the queried netCDF file for each request. Further automation is possible with a download agent such as wget, using the batch script example linked above.
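As a minimal sketch of that kind of automation, the function below assembles the NCSS Request URL from its parts. The function name `ncss_url` is hypothetical, and the file-name pattern for other variables and years is an assumption extrapolated from the single tmin/2015 example above; check the THREDDS catalog for the actual file names before relying on it.

```shell
#!/bin/sh
# Build an NCSS request URL for one Daymet variable and year.
# Arguments: variable year north west east south
ncss_url() {
    var=$1; year=$2; north=$3; west=$4; east=$5; south=$6
    base="https://thredds.daac.ornl.gov/thredds/ncss/ornldaac/1840"
    # Assumption: other files follow the naming of the tmin/2015 example.
    file="daymet_v4_daily_na_${var}_${year}.nc"
    query="var=lat&var=lon&var=${var}"
    query="${query}&north=${north}&west=${west}&east=${east}&south=${south}"
    query="${query}&disableProjSubset=on&horizStride=1"
    # Colons in the timestamps are percent-encoded as %3A.
    query="${query}&time_start=${year}-01-01T12%3A00%3A00Z"
    query="${query}&time_end=${year}-12-31T12%3A00%3A00Z"
    query="${query}&timeStride=1&accept=netcdf"
    printf '%s\n' "${base}/${file}?${query}"
}

# Usage (performs network requests; output file names are arbitrary):
# for year in 2014 2015; do
#     wget -O "tmin_${year}_subset.nc" \
#         "$(ncss_url tmin "$year" 36.61 -85.37 -81.29 33.57)"
# done
```

Called with the values from the GUI example (`ncss_url tmin 2015 36.61 -85.37 -81.29 33.57`), the function reproduces the request URL shown above.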

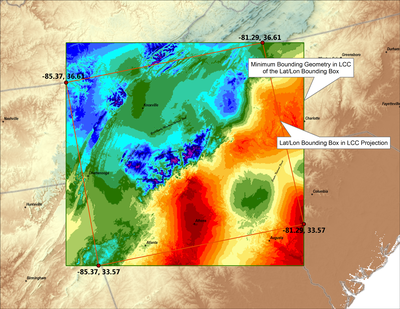

A note about the lat/lon subset and Daymet projection system

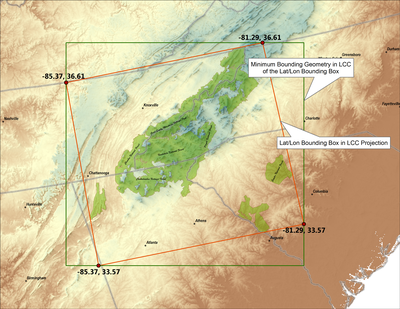

The Daymet dataset is defined in a Lambert Conformal Conic (LCC) projection. The NCSS subset tool therefore has to find the minimum bounding area, in LCC projected coordinates, that encompasses the input geographic lat/lon bounding box. This minimum bounding area is used to subset the Daymet data. The resulting output file is rectangular in the LCC projection, with corners estimated from the minimum bounding area of the input lat/lon coordinates.

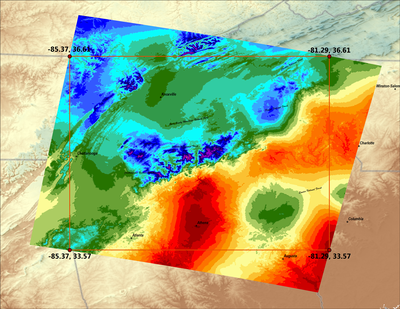

In this example, a bounding box that includes Great Smoky Mountains National Park and the surrounding National Forests is input in latitude/longitude. In a geographic coordinate system (fig 1a) the bounding box is rectangular. In LCC projected coordinates (fig 1b) the same geographic bounding box appears as the red box, together with the minimum bounding area that includes the lat/lon subset coordinates. One time slice of the resulting subset Daymet minimum temperature netCDF data file is displayed in a geographic coordinate system (fig 2a) and in the Daymet LCC projection (fig 2b).

Scripts to Automate Single Pixel Data Extraction

A Daymet Single Pixel Extraction Web Service API is provided based on REST URL transfer architecture. This web service allows the following functions:

- Browser viewing (both table and graph form)

- CSV file download of the data for lat/lon locations directly provided from the browser URL

- CSV file download through command utilities such as Wget and cURL

Batch Downloads - Batch Download Examples

A GitHub repo of methods (Bash, Java, and Python) for automating downloads of Daymet Single Pixel data for multiple locations.

Visit the OpenAPI documentation page to learn more about using Daymet Single Pixel Extraction Web Services.

See the Daymet Learning page for Community Contributed Scripts and other useful resources.

Tool Citation: Thornton, M.M., and R. Devarakonda. 2011. Daymet Single Pixel Extraction Tool. ORNL DAAC, Oak Ridge, Tennessee, USA. https://doi.org/10.3334/ORNLDAAC/2361

Understanding the Single Pixel REST URL

The Daymet Single Pixel Extraction Web Service API is provided based on REST URL transfer architecture. This web service allows browser viewing (both table and graph form) or CSV file download of the data for lat/lon locations directly provided from the browser URL. CSV file download is also possible through command utilities such as Wget and cURL.

Example REST URL:

https://daymet.ornl.gov/single-pixel/api/data?lat=Latitude&lon=Longitude&vars=CommaSeparatedVariables&years=CommaSeparatedYears

OR

https://daymet.ornl.gov/single-pixel/api/data?lat=Latitude&lon=Longitude&vars=CommaSeparatedVariables&start=StartDate&end=EndDate

Latitude (required): Latitude of a single geographic point, with a value between 14.5N and 52.0N.

Usage Example: lat=43.1

Longitude (required): Longitude of a single geographic point, with a value between -131.0 and -53.0 (western longitudes are negative).

Usage Example: lon=-85.3

CommaSeparatedVariables (optional): Daymet parameters include minimum and maximum temperature, precipitation, humidity (as vapor pressure), shortwave radiation, snow water equivalent, and day length.

Abbreviations:

- tmax - maximum temperature

- tmin - minimum temperature

- srad - shortwave radiation

- vp - vapor pressure

- swe - snow-water equivalent

- prcp - precipitation

- dayl - daylength

Usage Example: vars=tmax,tmin

All variables are returned by default.

CommaSeparatedYears (optional): Current Daymet product (version 3) is available from 1980 to the latest full calendar year.

Usage Example: years=2012,2013

If both years and start/end dates are supplied, years takes precedence.

StartDate & EndDate (optional): Current Daymet product (version 3) is available from 1980 to the latest full calendar year. Date elements follow the ISO 8601 convention: YYYY-MM-DD

Usage Example: start=2012-01-31&end=2012-03-31
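The parameter rules above can be sketched as a small shell function that assembles a request URL. This is an illustrative sketch, not part of the Daymet tooling: the function name `single_pixel_url` and its argument order are hypothetical, and the range check simply mirrors the latitude and longitude limits listed above.

```shell
#!/bin/sh
# Build a Single Pixel API URL.
# Arguments: lat lon [vars] [years] [start] [end]
# Pass "" to skip an optional argument.
single_pixel_url() {
    lat=$1; lon=$2; vars=${3:-}; years=${4:-}; start=${5:-}; end=${6:-}
    # Reject points outside the documented ranges; awk does the
    # floating-point comparison that plain sh cannot.
    if ! awk -v lat="$lat" -v lon="$lon" 'BEGIN {
            ok = (lat + 0 >= 14.5 && lat + 0 <= 52.0 &&
                  lon + 0 >= -131.0 && lon + 0 <= -53.0)
            exit !ok
        }'; then
        echo "point outside documented range: lat=$lat lon=$lon" >&2
        return 1
    fi
    url="https://daymet.ornl.gov/single-pixel/api/data?lat=${lat}&lon=${lon}"
    # Omitting vars returns all variables.
    if [ -n "$vars" ]; then url="${url}&vars=${vars}"; fi
    # Years takes precedence over start/end dates.
    if [ -n "$years" ]; then
        url="${url}&years=${years}"
    elif [ -n "$start" ] && [ -n "$end" ]; then
        url="${url}&start=${start}&end=${end}"
    fi
    printf '%s\n' "$url"
}
```

For example, `single_pixel_url 43.1 -85.3 tmax,tmin 2012,2013` prints a URL with the `vars` and `years` parameters set, while `single_pixel_url 43.1 -85.3 prcp "" 2012-01-01 2012-01-31` uses the date range instead.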

Wget and cURL

Wget and cURL are simple-to-use command line tools for downloading files. Wget saves the response as an ASCII text CSV file. cURL writes the data to the terminal by default, but the output can be redirected to a file.

From the command line, execute:

$ wget 'https://daymet.ornl.gov/single-pixel/api/data?lat=Latitude&lon=Longitude&vars=CommaSeparatedVariables&years=CommaSeparatedYears'

or

$ curl 'https://daymet.ornl.gov/single-pixel/api/data?lat=Latitude&lon=Longitude&vars=CommaSeparatedVariables&years=CommaSeparatedYears'

| Request | Command |
| --- | --- |
| example wget command | $ wget 'https://daymet.ornl.gov/single-pixel/api/data?lat=43.1&lon=-85.3&vars=tmax,tmin&years=2012,2013' |
| all parameters | $ wget 'https://daymet.ornl.gov/single-pixel/api/data?lat=43.1&lon=-85.3&years=2012,2013' |
| one parameter, all years | $ wget 'https://daymet.ornl.gov/single-pixel/api/data?lat=43.1&lon=-85.3&vars=prcp' |
| one parameter, one month | $ wget 'https://daymet.ornl.gov/single-pixel/api/data?lat=43.1&lon=-85.3&vars=prcp&start=2012-01-01&end=2012-01-31' |
| example curl command | $ curl 'https://daymet.ornl.gov/single-pixel/api/data?lat=43.1&lon=-85.3&vars=tmax,tmin&years=2012,2013' |
| all parameters | $ curl 'https://daymet.ornl.gov/single-pixel/api/data?lat=43.1&lon=-85.3&years=2012,2013' |
| one parameter, all years | $ curl 'https://daymet.ornl.gov/single-pixel/api/data?lat=43.1&lon=-85.3&vars=prcp' |
| one parameter, one month | $ curl 'https://daymet.ornl.gov/single-pixel/api/data?lat=43.1&lon=-85.3&vars=prcp&start=2012-01-01&end=2012-01-31' |
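The single commands above extend naturally to several locations. The sketch below is one possible approach and is not taken from the batch download repository: the function name `plan_downloads`, the input file `points.txt`, and the output file naming are all hypothetical. It reads one "lat lon" pair per line and prints the output file name and request URL for each, leaving the actual download to a separate loop.

```shell
#!/bin/sh
# Read "lat lon" pairs from standard input and print one
# "output-file URL" pair per line for the given variables and years.
# Arguments: vars years
plan_downloads() {
    vars=$1; years=$2
    while read -r lat lon; do
        # Skip blank lines and comment lines starting with '#'.
        case $lat in ''|'#'*) continue ;; esac
        printf '%s %s\n' "daymet_${lat}_${lon}.csv" \
            "https://daymet.ornl.gov/single-pixel/api/data?lat=${lat}&lon=${lon}&vars=${vars}&years=${years}"
    done
}

# Usage (performs network requests; points.txt is a hypothetical file
# with one "lat lon" pair per line):
# plan_downloads tmax,tmin 2012,2013 < points.txt |
# while read -r out url; do
#     curl -s -f -o "$out" "$url"
#     sleep 1   # short pause between requests
# done
```

Separating URL generation from the download step makes it easy to inspect the planned requests before any data is transferred.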

Browser View

Data can be viewed in any web browser. In the address bar, type:

https://daymet.ornl.gov/single-pixel/preview?lat=Latitude&lon=Longitude&vars=CommaSeparatedVariables&years=CommaSeparatedYears

| Request | URL |
| --- | --- |
| example URL | https://daymet.ornl.gov/single-pixel/preview?lat=43.1&lon=-85.3&vars=tmax,tmin&years=2012,2013 |
| all parameters | https://daymet.ornl.gov/single-pixel/preview?lat=43.1&lon=-85.3&years=2012,2013 |
| one parameter, all years | https://daymet.ornl.gov/single-pixel/preview?lat=43.1&lon=-85.3&vars=prcp |